Suppose someone, let’s call her Alice, has a book of secrets she wants to destroy, so she tosses it into a handy black hole. Given that black holes are nature’s fastest scramblers, acting like giant garbage shredders, Alice’s secrets must be pretty safe, right?

Now suppose her nemesis, Bob, has a quantum computer that’s entangled with the black hole. (In entangled quantum systems, actions performed on one particle similarly affect its entangled partners, regardless of distance or even if some disappear into a black hole.)

A famous thought experiment by Patrick Hayden and John Preskill says Bob can observe a few particles of light that leak from the edges of a black hole. Then Bob can run those photons as qubits (the basic processing unit of quantum computing) through the gates of his quantum computer to reveal the particular physics that jumbled Alice’s text. From that, he can reconstruct the book.

But not so fast.

Our recent work on quantum machine learning suggests Alice’s book might be gone forever, after all.

QUANTUM COMPUTERS TO STUDY QUANTUM MECHANICS

Alice might never have the chance to hide her secrets in a black hole. Still, our new no-go theorem about information scrambling has real-world application to understanding random and chaotic systems in the rapidly expanding fields of quantum machine learning, quantum thermodynamics, and quantum information science.

Richard Feynman, one of the great physicists of the 20th century, launched the field of quantum computing in a 1981 speech, when he proposed developing quantum computers as the natural platform to simulate quantum systems. Such systems are notoriously difficult to study otherwise.

Our team at Los Alamos National Laboratory, along with other collaborators, has focused on studying algorithms for quantum computers and, in particular, machine-learning algorithms—what some like to call artificial intelligence. The research sheds light on what sorts of algorithms will do real work on existing noisy, intermediate-scale quantum computers and on unresolved questions in quantum mechanics at large.

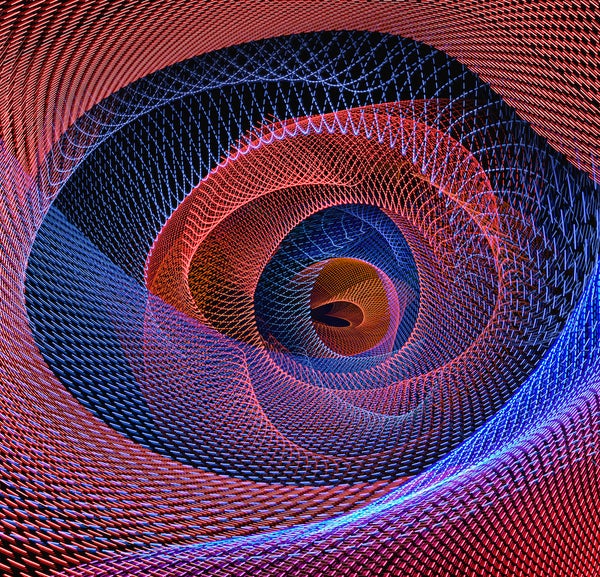

In particular, we have been studying the training of variational quantum algorithms. They set up a problem-solving landscape where the peaks represent the high-energy (undesirable) points of the system, or problem, and the valleys are the low-energy (desirable) values. To find the solution, the algorithm works its way through a mathematical landscape, examining its features one at a time. The answer lies in the deepest valley.
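For readers who like to see the idea in code, here is a minimal sketch of that landscape search on the smallest possible example. It is an illustration, not our research code. Everything in it is an assumption chosen for simplicity: a single qubit, a one-parameter circuit (`ansatz_state`), an "energy" defined as the expectation value of the Pauli Z operator, and hypothetical helper names. The slope is computed with the parameter-shift rule, a standard way to obtain gradients of such circuits, and plain gradient descent walks downhill.

```python
import numpy as np

# Observable whose expectation value plays the role of the "energy" (Pauli Z).
Z = np.array([[1.0, 0.0], [0.0, -1.0]])

def ansatz_state(theta):
    """State prepared by rotating |0> about the Y axis by angle theta."""
    return np.array([np.cos(theta / 2), np.sin(theta / 2)])

def energy(theta):
    """Height of the landscape at theta: <psi(theta)| Z |psi(theta)> = cos(theta)."""
    psi = ansatz_state(theta)
    return float(psi @ Z @ psi)

def parameter_shift_gradient(theta):
    """Slope of the landscape, from two extra energy evaluations."""
    return 0.5 * (energy(theta + np.pi / 2) - energy(theta - np.pi / 2))

def descend(theta=0.5, learning_rate=0.4, steps=100):
    """Walk downhill until the deepest valley is (approximately) reached."""
    for _ in range(steps):
        theta -= learning_rate * parameter_shift_gradient(theta)
    return theta, energy(theta)

theta_opt, e_min = descend()
print(f"theta = {theta_opt:.4f}, energy = {e_min:.6f}")
```

In this toy landscape the valley sits at an angle of pi, where the energy reaches its lowest possible value of -1. Real variational algorithms have many parameters and many qubits, which is where the trouble described later begins.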

ENTANGLEMENT LEADS TO SCRAMBLING

We wondered if we could apply quantum machine learning to understand scrambling. This quantum phenomenon happens when entanglement grows in a system made of many particles or atoms. Think of the initial conditions of this system as a kind of information—Alice’s book, for instance. As the entanglement among particles within the quantum system grows, the information spreads widely; this scrambling of information is key to understanding quantum chaos, quantum information science, random circuits and a range of other topics.

A black hole is the ultimate scrambler. By exploring it with a variational quantum algorithm on a theoretical quantum computer entangled with the black hole, we could probe the scalability and applicability of quantum machine learning. We could also learn something new about quantum systems generally. Our idea was to use a variational quantum algorithm that would exploit the leaked photons to learn about the dynamics of the black hole. The approach would be an optimization procedure—again, searching through the mathematical landscape to find the lowest point.

If we found it, we would reveal the dynamics inside the black hole. Bob could use that information to crack the scrambler’s code and reconstruct Alice’s book.

Now here’s the rub. The Hayden-Preskill thought experiment assumes Bob can determine the black hole dynamics that are scrambling the information. Instead, we found that the very nature of scrambling prevents Bob from learning those dynamics.

STALLED OUT ON A BARREN PLATEAU

Here’s why: the algorithm stalled out on a barren plateau, which, in machine learning, is as grim as it sounds. During machine-learning training, a barren plateau represents a problem-solving space that is entirely flat as far as the algorithm can see. In this featureless landscape, the algorithm can’t find the downward slope; there’s no clear path to the energy minimum. The algorithm just spins its wheels, unable to learn anything new. It fails to find the solution.
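The flattening can be seen numerically in a small simulation. The sketch below is not the setting of our theorem. It uses a generic randomly parameterized circuit as a stand-in, and all of its ingredients are assumptions made for illustration: layers of RX and RY rotations followed by a chain of controlled-Z gates, a depth of twice the number of qubits, a cost equal to the expectation of Z on the first qubit, and hypothetical function names. It estimates how much the slope of the landscape varies across random starting points as the number of qubits grows.

```python
import numpy as np

def rx(theta):
    c, s = np.cos(theta / 2), np.sin(theta / 2)
    return np.array([[c, -1j * s], [-1j * s, c]])

def ry(theta):
    c, s = np.cos(theta / 2), np.sin(theta / 2)
    return np.array([[c, -s], [s, c]], dtype=complex)

def apply_gate(psi, gate, qubit):
    """Apply a 2x2 gate to one qubit of a state stored with shape (2,)*n."""
    psi = np.tensordot(gate, psi, axes=([1], [qubit]))
    return np.moveaxis(psi, 0, qubit)

def apply_cz(psi, q1, q2):
    """Controlled-Z: flip the sign of amplitudes where both qubits are 1."""
    psi = psi.copy()
    index = [slice(None)] * psi.ndim
    index[q1], index[q2] = 1, 1
    psi[tuple(index)] *= -1
    return psi

def cost(params):
    """Expectation of Z on qubit 0 after the layered circuit acts on |0...0>."""
    layers, n, _ = params.shape
    psi = np.zeros((2,) * n, dtype=complex)
    psi[(0,) * n] = 1.0
    for layer in range(layers):
        for q in range(n):
            psi = apply_gate(psi, rx(params[layer, q, 0]), q)
            psi = apply_gate(psi, ry(params[layer, q, 1]), q)
        for q in range(n - 1):
            psi = apply_cz(psi, q, q + 1)
    probs = np.abs(psi) ** 2
    return float(probs[0].sum() - probs[1].sum())

def gradient_first_param(params):
    """Parameter-shift slope with respect to the very first rotation angle."""
    shift = np.zeros_like(params)
    shift[0, 0, 0] = np.pi / 2
    return 0.5 * (cost(params + shift) - cost(params - shift))

def gradient_variance(n, rng, samples=200):
    """Spread of the slope over random starting points (depth 2n is an assumption)."""
    grads = [
        gradient_first_param(rng.uniform(0, 2 * np.pi, size=(2 * n, n, 2)))
        for _ in range(samples)
    ]
    return float(np.var(grads))

rng = np.random.default_rng(0)  # fixed seed so the run is repeatable
variances = {n: gradient_variance(n, rng) for n in (2, 4, 6)}
for n, v in variances.items():
    print(f"{n} qubits: gradient variance = {v:.5f}")
```

If the landscape is flattening, the typical slope should shrink as qubits are added, and the variance at six qubits should come out well below the variance at two. A few qubits are all a laptop simulation like this can comfortably handle, but the trend is the point: on a large enough system, the slope becomes too small to follow.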

Our resulting no-go theorem says that any quantum machine-learning strategy will encounter the dreaded barren plateau when applied to an unknown scrambling process.

The good news is, most physical processes are not as complex as black holes, and we often will have prior knowledge of their dynamics, so the no-go theorem doesn’t condemn quantum machine learning. We just need to carefully pick the problems we apply it to. And we’re not likely to need quantum machine learning to peer inside a black hole to learn about Alice’s book—or anything else—anytime soon.

So, Alice can rest assured that her secrets are safe, after all.

This is an opinion and analysis article, and the views expressed by the author or authors are not necessarily those of Scientific American.