Using ChatGPT to help you write is like donning a pair of high heels. The reason, according to one postgraduate researcher from China: “It makes my writing look noble and elegant, although I occasionally fall flat on my face in the academic world.”

This comparison came from a participant in a recent study of students who have adopted generative artificial intelligence in their work. Researchers asked international students completing postgraduate studies in the U.K. to explain AI’s role in their writing using a metaphor.

The responses were creative and diverse: AI was said to be a spaceship, a mirror, a performance-enhancing drug, a self-driving car, makeup, a bridge or fast food. Two people compared generative AI with Spider-Man, another with the magical Marauders’ Map from Harry Potter. These comparisons reveal how adopters of this technology are feeling its impact on their work during a time when institutions are struggling to draw lines around which uses are ethical and which are not.

“Generative AI has transformed education dramatically,” says senior study author Chin-Hsi Lin, an education technology researcher at the University of Hong Kong. “We want students to be able to express their ideas” about how they’re using it and how they feel about it, he says.

Lin and his colleagues recruited postgraduate students from 14 regions, including countries such as China, Pakistan, France, Nigeria and the U.S., who were studying in the U.K. and used ChatGPT-4 in their work. At the time, that model was available only to paid subscribers. The students were asked to come up with and explain a metaphor for the way generative AI impacts their academic writing. To check that the 277 metaphors in the participants’ responses were true to their actual use of the technology, the researchers conducted in-depth interviews with 24 of the students and asked them to provide screenshots of their interactions with AI.

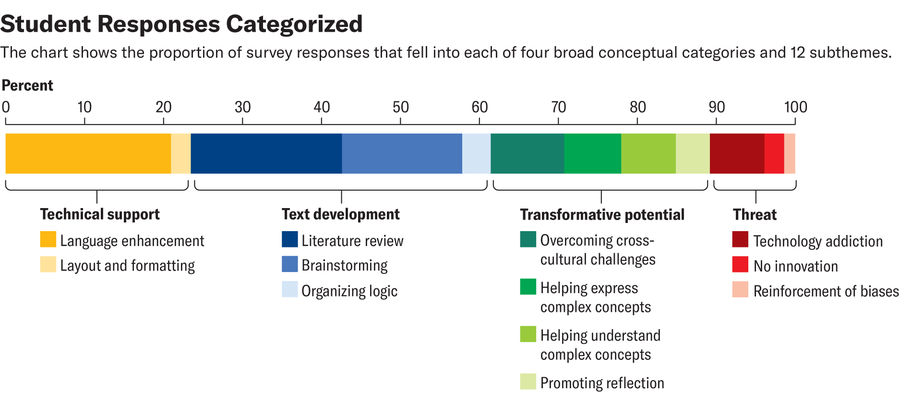

By analyzing the responses, the researchers found four categories for how students were using and thinking about AI in their work. The most basic of these was technical support: the use of AI to check English grammar or format a reference list. Participants likened AI to aesthetic enhancements such as makeup or high heels, a human role such as a language tutor or editor, or a mechanical tool such as a packaging machine or measuring tape.

In the next category, text development, generative AI was more involved in the writing process itself. Some students used it to organize the logic of their writing; one person equated it to Tesla’s Autopilot because it helped them stay on track. Others used it to help with their literature review and likened it to an assistant (a common metaphor used in AI marketing) or a personal shopper. And students who used the chatbot to help brainstorm often used metaphors that described the technology as a guide. They called it a compass, a companion, a bus driver or a magic map.

In the third category, students used AI to more meaningfully transform their writing process and final product. Here, they called the technology a “bridge” or a “teacher” that could help them overcome cross-cultural boundaries in communication styles—especially important because academic writing is so often done in English. Eight people described it as a mind reader because, to quote one participant, it helped express “those deeply nuanced concepts that are hard to articulate.”

Others said it helped them actually understand those difficult concepts, especially by pulling from different disciplines. Three people likened it to a spaceship and two to Spider-Man—“because it can swiftly navigate through the complex web of academic information” across disciplines.

In the fourth category, students’ metaphors highlighted the potential hazards of AI. Some of the participants expressed discomfort with the way it enables a lack of innovation (like a painter that just copies others’ work) or a lack of deeper understanding (like fast food, convenient but not nutritious). In this category, students most commonly called it a drug—especially an addictive one. One particularly apt response compared it to steroids in sports: “in a competitive environment, no one wants to fall behind because they do not use it.”

Amanda Montañez; Source: “High Heels, Compass, Spider-Man or Drug? Metaphor Analysis of Generative Artificial Intelligence in Academic Writing,” by Fangzhou Jin et al., in Computers & Education, Vol. 228; April 2025 (data)

“Metaphors really matter, and they have shaped the public discourse” for all kinds of new technologies, says Emily Weinstein, a technology researcher at Harvard University’s Center for Digital Thriving, who was not involved in the new study. The comparisons we use to talk about a new technology can reveal our assumptions about how it works, and even our blind spots.

For example, “there are threats implicit in the other metaphors that are here,” she says. Driver-assistance systems sometimes cause a crash. A fantasy world’s mind readers or magic maps can’t be explained by science but merely have to be trusted. And high heels, as the participant highlighted, can make you more likely to fall on your face.

There’s never only one right metaphor to talk about a new technology, Weinstein says. For example, drug or cigarette metaphors are very common when people talk about social media, and in some ways, they’re apt. Apps like TikTok and Instagram can be genuinely addicting and are often targeted at teens. But when we try to assign just one metaphor to a new technology, we risk flattening it and overlooking both its benefits and dangers.

“If your mental model of social media is that it’s crack [cocaine], it’s going to be hard for us to have a conversation about moderating use, for example,” she says.

And culturally, our mental models of generative AI are still seriously lacking. “The problem is that right now we’re missing ways to talk about the details. There’s so much moral panic and reaction,” she says. But “I think a lot of the stuff that gives us this moral, emotional reaction ... has to do with us not having language or ways to talk more specifically” about what we want from this technology.

Creating this new language will require more listening and classroom discussion, perhaps even on a per-assignment basis. This approach can relieve the pressure on teachers to understand every potential use of AI, and it can ensure that students aren’t left standing in a gray area without guidance. For certain tasks, teachers and advisers might want to allow students to use generative AI as a compass for brainstorming or as the Spider-Man to their Gwen Stacy to help them swing across the World Wide Web.

“There are different learning goals for different assignments and different contexts,” Weinstein says. “And sometimes your goal might not actually be in tension with a more transformative use.”