Stroke, amyotrophic lateral sclerosis and other medical conditions can rob people of their ability to speak. Their communication is limited to the speed at which they can move a cursor with their eyes (just eight to 10 words per minute), in contrast with the natural spoken pace of 120 to 150 words per minute. Now, although still a long way from restoring natural speech, researchers at the University of California, San Francisco, have generated intelligible sentences from the thoughts of people without speech difficulties.

The work provides a proof of principle that it should one day be possible to turn imagined words into understandable, real-time speech circumventing the vocal machinery, Edward Chang, a neurosurgeon at U.C.S.F. and co-author of the study published in April in Nature, said in a news conference. “Very few of us have any real idea of what’s going on in our mouth when we speak,” he said. “The brain translates those thoughts of what you want to say into movements of the vocal tract, and that’s what we want to decode.”

But Chang cautions that the technology, which has only been tested on people with typical speech, might be much harder to make work in those who cannot speak—and particularly in people who have never been able to speak because of a movement disorder such as cerebral palsy.

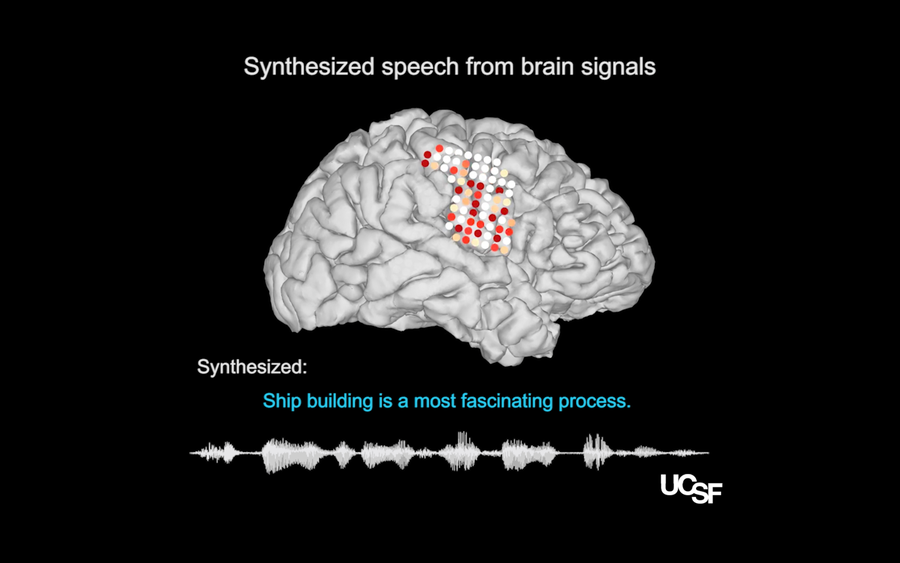

Illustrations of electrode placements on the research participants’ neural speech centers, from which activity patterns recorded during speech (colored dots) were translated into a computer simulation of the participant’s vocal tract (model, right) which then could be synthesized to reconstruct the sentence that had been spoken (sound wave & sentence, below). Credit: Chang lab and the UCSF Dept. of Neurosurgery

Chang also emphasized that his approach cannot be used to read someone’s mind—only to translate words the person wants to say into audible sounds. “Other researchers have tried to look at whether or not it’s actually possible to decode essentially just thoughts alone,” he says.* “It turns out it’s a very difficult and challenging problem. That’s only one reason of many that we focus on what people are trying to say.”

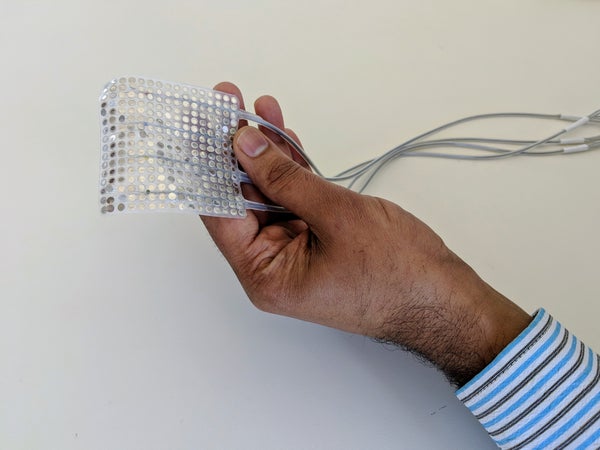

Chang and his colleagues devised a two-step method for translating thoughts into speech. First, in tests with epilepsy patients whose neural activity was being measured with electrodes on the surface of their brain, the researchers recorded signals from brain areas that control the tongue, lips and throat muscles. Later, using deep-learning computer algorithms trained on naturally spoken words, they translated those movements into audible sentences.

At this point, a decoding system would have to be trained on each person’s brain, but the translation into sounds can be generalized across people, said co-author Gopala Anumanchipalli, also of U.C.S.F. “Neural activity is not one-on-one transferrable across subjects, but the representations underneath are shareable, and that’s what our paper explores,” he said.

The researchers asked native English speakers on Amazon’s Mechanical Turk crowdsourcing marketplace to transcribe the sentences they heard. The listeners accurately heard the sentences 43 percent of the time when given a set of 25 possible words to choose from, and 21 percent of the time when given 50 words, the study found.

Although the accuracy rate remains low, it would be good enough to make a meaningful difference to a “locked-in” person, who is almost completely paralyzed and unable to speak, the researchers say. “For someone who’s locked in and can’t communicate at all, a few minor errors would be acceptable,” says Marc Slutzky, a neurologist and neural engineer at the Northwestern University Feinberg School of Medicine, who has published related research but was not involved in the new study. “Even a few hundred words would be a huge improvement,” he says. “Obviously you’d want to [be able to] say any word you’d want to, but it would still be a lot better than having to type out words one letter at a time, which is the [current] state of the art.”

Even when the volunteers did not hear the sentences entirely accurately, the phrases were often similar in meaning to those that were silently spoken. For example, “rabbit” was heard as “rodent,” Josh Chartier of U.C.S.F., another co-author of the study, said at the news conference. Sounds like the “sh” in “ship” were decoded particularly well, whereas sounds like “th” in “the” were especially challenging, Chartier added.

Several other research groups in the United States and elsewhere are also making significant advances in decoding speech, but the new study marks the first time that full sentences have been correctly interpreted, according to Slutzky and other scientists not involved in the work.

“I think this paper is an example of the power that can come from thinking about how to harness both the biology and the power of machine learning,” says Leigh Hochberg, a neurologist at Massachusetts General Hospital in Boston, and a neuroscientist at Brown University and Providence VA Medical Center. Hochberg was not involved in the work.

The study is generating excitement in the field, but researchers say the technology is not yet ready for clinical trials. “Within the next 10 years, I think that we’ll be seeing systems that will improve people’s ability to communicate,” says Jaimie Henderson, a professor of neurosurgery at Stanford University, who was not involved in the new study. He says the remaining challenges include determining whether using finer-grained analysis of brain activity will improve speech decoding; developing a device that can be implanted in the brain and can decode speech in real time; and extending the benefits to people who cannot speak at all (whose brains have not been primed to talk).

Hochberg says he is reminded of what is at stake in this kind of research “every time I’m in the neurointensive care unit and I see somebody who may have been walking and talking without difficulty yesterday, but who had a stroke and now can no longer either move or speak.” Although he would love for the work to move faster, Hochberg says he is pleased with the field’s progress. “I think brain-computer interfaces will have a lot of opportunity to help people, and hopefully, to help people quickly.”

*Editor’s Note (April 24, 2019): This quote has been updated. Chang clarified his original statement to specify that his lab has not attempted to decode thoughts alone.