On New Year’s Eve in 2015, local and federal agents arrested a 26-year-old man in Rochester, N.Y., for planning to attack people at random later that night using knives and a machete. Just before his capture Emanuel L. Lutchman had made a video, to be posted to social media following the attack, in which he pledged his allegiance to the Islamic State in Iraq and Syria, or ISIS. Lutchman would later say a key source of inspiration for his plot came from similar videos, posted and shared across social media and Web sites in support of the Islamic State and “violent jihad.”

Lutchman—now serving a 20-year prison sentence—was especially captivated by videos of Anwar al-Awlaki, a U.S.-born cleric and al Qaeda recruiter in Yemen. Al-Awlaki’s vitriolic online sermons are likewise blamed for inspiring the 2013 Boston Marathon bombers and several other prominent terrorist attacks in the U.S. and Europe over the past 15 years. Officials had been monitoring Lutchman’s activities days before his arrest and moved in immediately after he finished making his video—which was never posted, and ironically ended up serving as evidence of his intention to commit several crimes.

But al-Awlaki’s videos continue to motivate impressionable young people more than five years after the cleric—dubbed the “bin Laden of the internet”—died in a U.S. drone strike. Facebook, Twitter and Google’s YouTube have been scrambling to remove terrorist propaganda videos and shut down accounts linked to ISIS and some other violent groups. The footage has been copied and shared so many times, however, that it remains widely available on those sites to digitally savvy and extremism-prone millennials like Lutchman and Omar Mir Seddique Mateen. The latter pledged allegiance to ISIS on social media before murdering 49 people in Orlando’s Pulse Nightclub last June.

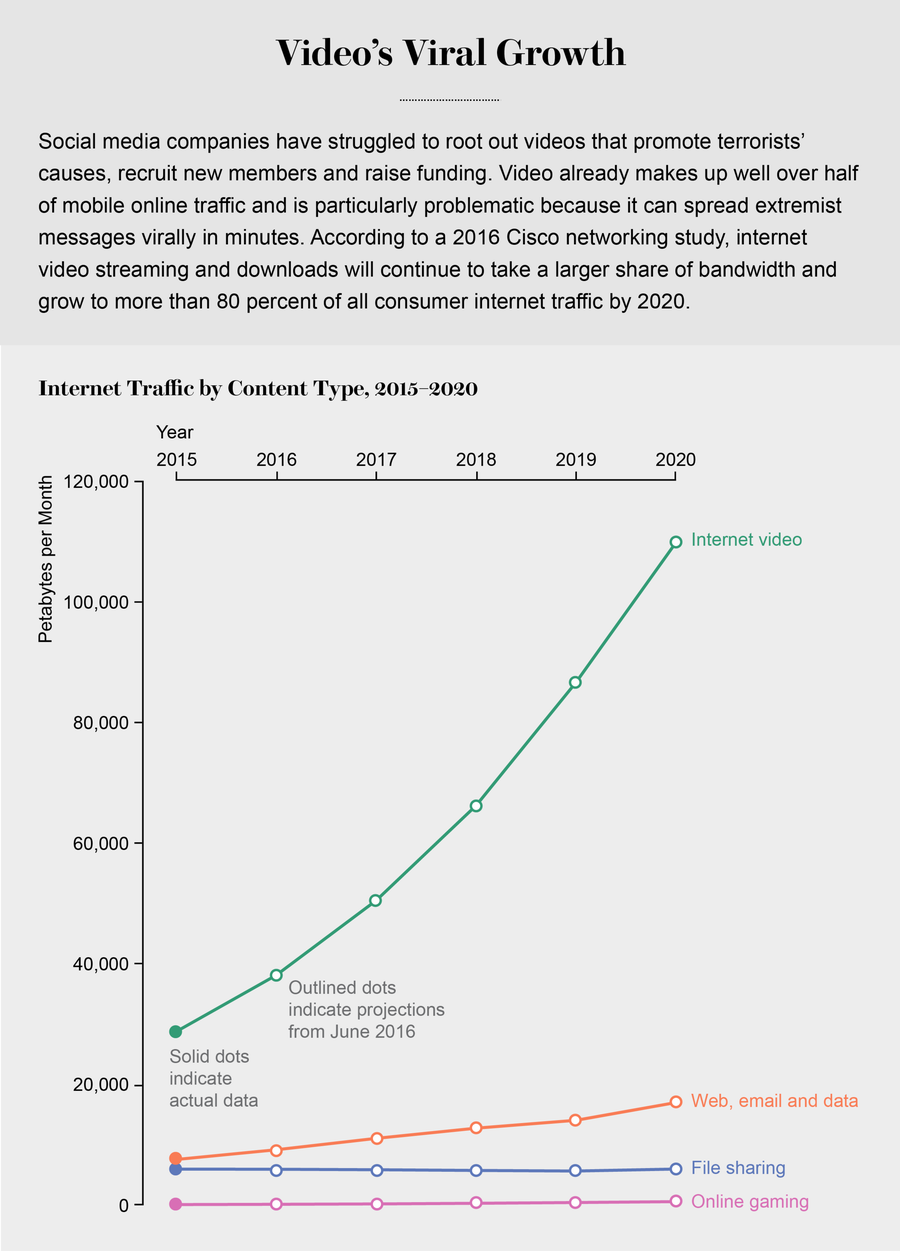

Social media companies have long used sophisticated algorithms to mine users’ words, images, videos and location data to improve search results and to finely target advertising. But efforts to apply similar technology to root out videos that promote terrorists’ causes, recruit new members and raise funding have been less successful. Video, which makes up well over half of mobile online traffic, is particularly problematic because it can spread extremists’ messages virally in minutes, is difficult to track and even harder to eliminate. Despite these high-profile challenges, Facebook, Google and Twitter face a growing backlash—including advertiser boycotts and lawsuits—pushing them to deal more effectively with the darker elements of the platforms they have created. New video “fingerprinting” technologies are emerging that promise to flag extremist videos as soon as they are posted. Big questions remain, however: Will these tools work well enough to keep terrorist videos from proliferating on social media? And will the companies that have enabled such propaganda embrace them?

Credit: Amanda Montañez; Source: Cisco Visual Networking Index, 2016

Social Media’s ISIS Problem

ISIS has a well-established playbook for using social media and other online channels to attract new recruits and encourage them to act on the terrorist group’s behalf, according to J. M. Berger, a former nonresident fellow in The Brookings Institution’s project U.S. Relations with the Islamic World. “The average age of an [ISIS] recruit is about 26,” says Seamus Hughes, deputy director of The George Washington University’s Program on Extremism. “These young people aren’t learning how to use social media—they already know it because they grew up with it.”

ISIS videos became such a staple on YouTube a few years ago that the site’s automated advertising algorithms were inserting advertisements for Procter & Gamble, Toyota and Anheuser–Busch in front of videos associated with the terrorist group. Despite assurances at the time that Google was removing the ads and in some cases the videos themselves, the problem is far from solved. In March Google’s president of its EMEA (Europe, Middle East and Africa) business and operations, Matthew Brittin, apologized to large advertisers—including Audi, Marks and Spencer, and McDonald’s U.K.—who had pulled their online ads after discovering they had appeared alongside content from terrorist groups and white supremacists. AT&T, Johnson & Johnson and other major U.S. advertisers have boycotted YouTube for the same reason.

Whether Google had turned a blind eye to such content is a matter of debate, primarily between the company and the Counter Extremism Project (CEP), a nonprofit nongovernmental organization formed in 2014 to monitor and report on the activities of terrorist groups and their supporters. More certain is the fact that on Google’s sprawling YouTube network there is no easy way to find and take down all or even most of the video that crosses the line from being merely provocative to a full-on call for violence and hatred. Social media sites including Facebook, Google and Twitter have for the past few years used Microsoft’s PhotoDNA software with some success to automatically identify still images they might want to remove—but there has been no comparable tool for video. Social media sites instead rely mostly on their large user bases to report videos that might violate the companies’ policies against posting terrorist propaganda. Facebook CEO Mark Zuckerberg’s recent promise to add 3,000 more reviewers to help better monitor video posted to the site only highlights the magnitude of the problem. As Twitter noted in at least two separate blog posts last year, there is no “magic algorithm” for identifying terrorist rhetoric and recruitment efforts on the internet.

Video Signatures

That argument might not hold much longer. A key researcher behind PhotoDNA says he is developing software called eGLYPH, which applies PhotoDNA’s basic principles to identify video promoting terrorism—even if the clips have been edited, copied or otherwise altered.

Large video files create an “imposing problem” for search tools because, depending on the quality, they might consist of 24 or 30 different images per second, says Hany Farid, a Dartmouth College computer science professor who helped Microsoft develop PhotoDNA nearly a decade ago to crack down on online child pornography. Software analyzing individual images in video files would be impractical because of the computer processing power required, Farid says. He has been working for more than a year to develop eGLYPH’s algorithm, which analyzes video, image and audio files, and creates a unique signature—called a “hash”—that can be used to identify either an entire video or specific scenes within a video. The software can compare hashes of new video clips posted online against signatures in a database of content known to promote terrorism. If a match is found, that new content could be taken down automatically or flagged for a human to review.
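The matching step Farid describes can be illustrated with a minimal sketch. It is not eGLYPH's actual algorithm, which the article does not detail. It assumes signatures are short bit strings stored as integers, that near-duplicates are found by Hamming distance (the number of differing bits), and that exact matches are removed while near matches go to a human reviewer. The names `hamming_distance`, `find_match`, `moderate` and the `max_distance` cutoff are all hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


def hamming_distance(a: int, b: int) -> int:
    """Count the bits that differ between two integer signatures."""
    return bin(a ^ b).count("1")


@dataclass
class Match:
    label: str      # identifier of the known clip in the database
    distance: int   # number of differing bits (0 means identical)


def find_match(signature: int, known: dict, max_distance: int = 4) -> Optional[Match]:
    """Return the closest known signature within max_distance bits, if any."""
    best = None
    for label, known_sig in known.items():
        d = hamming_distance(signature, known_sig)
        if d <= max_distance and (best is None or d < best.distance):
            best = Match(label, d)
    return best


def moderate(signature: int, known: dict, max_distance: int = 4) -> str:
    """Assumed policy: remove exact matches, send near matches to review."""
    match = find_match(signature, known, max_distance)
    if match is None:
        return "allow"
    return "remove" if match.distance == 0 else "review"


# Toy database holding one 16-bit signature (real signatures would be longer).
known_signatures = {"clip_a": 0b1011001011110000}

exact = moderate(0b1011001011110000, known_signatures)       # identical bits
near = moderate(0b1011001011110001, known_signatures)        # one bit flipped
unrelated = moderate(0b0100110100001111, known_signatures)   # every bit differs
```

Allowing a small distance rather than demanding an exact match is what lets a lookup of this kind tolerate re-encoded or lightly edited copies. The cutoff sets the trade-off between missed copies and false alarms.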

One of eGLYPH’s advantages is that it quickly creates a signature for a video without using lots of computing power, because it analyzes changes between frames rather than the entire image in each frame. “If you take a video of a person talking, much of the content is the same [from frame to frame],” Farid says. The idea is to “boil a video down to its essence,” taking note only when there is a significant change between frames—a new person enters the picture or the camera pans to a focus on something new. “We take these videos and try to remove as much of the redundancy in the data as possible and extract the signature from what’s left,” he says.
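A rough sketch of the redundancy-removal idea follows, again as an illustration under stated assumptions rather than Farid's method. Frames are modeled as flat lists of grayscale pixel values, a frame is kept only when it differs from the last kept frame by more than an arbitrary threshold, and each kept frame is reduced to a simple average-brightness bit pattern. The function names, the threshold of 20 and the bit-pattern scheme are hypothetical.

```python
def mean_abs_diff(a, b):
    """Average absolute pixel difference between two same-sized frames."""
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)


def select_keyframes(frames, threshold=20.0):
    """Return indices of frames that differ significantly from the last kept one."""
    if not frames:
        return []
    keep = [0]  # always keep the first frame
    for i in range(1, len(frames)):
        if mean_abs_diff(frames[i], frames[keep[-1]]) > threshold:
            keep.append(i)
    return keep


def frame_bits(frame):
    """One bit per pixel: 1 if brighter than the frame's average, else 0."""
    avg = sum(frame) / len(frame)
    bits = 0
    for pixel in frame:
        bits = (bits << 1) | (1 if pixel > avg else 0)
    return bits


def video_signature(frames, threshold=20.0):
    """Signature = bit patterns of the keyframes only; redundant frames are skipped."""
    return [frame_bits(frames[i]) for i in select_keyframes(frames, threshold)]


# Toy 4-pixel "video": a nearly static shot, then a cut to a different scene.
talking = [10, 200, 10, 200]
noisy = [12, 198, 11, 201]      # same scene with slight sensor noise
new_scene = [200, 10, 200, 10]  # the camera cuts to something new
frames = [talking, noisy, noisy, new_scene, new_scene]

keyframes = select_keyframes(frames)
signature = video_signature(frames)
```

Comparing each frame with the last kept frame, rather than with its immediate predecessor, is a design choice in this sketch: a slow pan still registers as a change once enough drift accumulates.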

Farid’s partner on eGLYPH is the CEP, which has built up a vast repository of hashed images, audio files and video that “contains the worst of the worst—content that few people would disagree represented acts of terrorism, extremist propaganda and recruiting materials,” says Mark Wallace, the CEP’s chief executive officer and a former U.S. ambassador. Video clips the CEP shared with Scientific American from its database featured executions—several by beheading—as well as bombings and other violent acts. eGLYPH compares the signatures it generates with those stored in the CEP database. This is similar in principle to PhotoDNA, which was developed to create and match signatures of images posted to the Web with those in The National Center for Missing & Exploited Children’s database of images the organization has labeled as child pornography.

Thanks, but No Thanks

The CEP has offered free access to both eGLYPH and the organization’s database to social media outlets trying to keep up with terrorism-related video posts. Facebook, Twitter and YouTube spurned the CEP’s proposal, however, instead announcing in December plans to develop their own shared database of hashed terrorism videos. Even Microsoft, which provided funding for Farid’s work on eGLYPH, opted to join the social media consortium and has been ambiguous about its ongoing commitment to eGLYPH.

Each of the social media companies says it will create its own database of signatures identifying videos and images depicting the “most extreme and egregious terrorist images and videos” they have removed from their services, according to a joint statement the companies issued December 5. The companies have not revealed many details about their project, such as how they will hash new videos posted to their platforms or how they will compare those signatures (which they call “fingerprints”) to hashed videos stored in their databases. Each company will decide independently whether to remove flagged videos from its site and apps, based on the company’s individual content-posting policies. The companies responded to Scientific American’s interview requests by referring to their December press release and other materials posted to their Web sites.

One reason for the companies’ fragmented approach to purging videos that support or incite terrorism is the lack of a universal definition of “terrorist” or “extremist” content—social media companies are unlikely to want to rely solely on the judgment of the CEP or their peers. Microsoft’s policy, for example, is to remove from its Azure Cloud hosting and other services any content that is produced by or in support of organizations on the Consolidated United Nations Security Council Sanctions List, which includes al-Awlaki and many ISIS members.

At Facebook, the more times users report content that might violate the company’s rules, the more likely it is to get the company’s attention, Joaquin Candela, the company’s director of applied machine learning, said at a Facebook artificial intelligence event in New York City in February. Candela did not comment specifically on the project announced in December but did say Facebook is developing AI algorithms that can analyze video posts for content that violates the company’s Community Standards. One of the main challenges to creating the algorithm is the tremendous volume of posts Facebook receives each day. Last year Facebook users worldwide watched 100 million hours of video daily, according to the company. Another challenge is tuning the algorithm so it can accurately identify content that should be taken down without falsely flagging images and video that comply with Facebook’s rules.

Twitter initially relied solely on its users to spot tweets—among the hundreds of millions posted daily—that might violate the company’s content-posting rules. Mounting criticism over Twitter’s seeming inability to control terrorism-related tweets, however, prompted the company to be more proactive. During the second half of 2016 alone Twitter suspended 376,890 accounts “for violations related to promotion of terrorism,” according to the company’s March “Transparency Report.” Nearly three quarters of the accounts suspended were discovered by Twitter’s spam-fighting tools, according to the company.

YouTube’s Community Guidelines prohibit video intended to “recruit for terrorist organizations, incite violence, celebrate terrorist attacks or otherwise promote acts of terrorism.” The site does, however, allow users to post videos “intended to document events connected to terrorist acts or news reporting on terrorist activities” as long as those clips include “sufficient context and intent.” That thin line creates a predicament for any software designed to automatically find prohibited footage, which might explain why YouTube relies mostly on visitors to its site to flag inappropriate content. YouTube announced in November 2010 it had removed hundreds of videos featuring al-Awlaki’s calls for violence, although many of his speeches are still available on the site—the CEP in February this year counted 71,400 videos carrying some portion of his rhetoric.

Social media companies have begun to buy into the idea that they need to do more to keep terrorist content off of their sites. They are increasingly adding bans on video prohibited by YouTube’s Community Guidelines. “Some of this has to do with corporate responsibility, but the vast majority probably has to do with public relations,” says Hughes at the Program on Extremism. To be fair, ISIS’s efforts to hijack social media have created philosophical and technological problems the companies likely had not anticipated when building their digital platforms for connecting friends and posting random thoughts, he says. “There’s been a learning curve. It takes a while to see how messages spread online and figure out whose accounts should be taken down and how to do it.”

The Case(s) against Social Media

Three lawsuits filed against the major social media companies over the past year have sought to accelerate that learning curve. The lawsuits cover Mateen’s Pulse Nightclub attack as well as the November 2015 Paris and December 2015 San Bernardino, Calif., mass shootings. The suits allege Facebook, Google and Twitter “knowingly and recklessly” provided ISIS with the ability to use their social networks as “a tool for spreading extremist propaganda, raising funds and attracting new recruits.”

Excolo Law—the Southfield, Mich., law firm that filed the suits on behalf of terrorist victims’ families—cites specific instances of attackers using social media before and even during the attacks. Between the time husband and wife Syed Rizwan Farook and Tashfeen Malik killed 14 people and injured 22 in San Bernardino and were themselves gunned down by law enforcement, Malik had posted a video to Facebook pledging her allegiance to ISIS, according to a suit filed with the U.S. District Court for the Central District of California. “If they put one tenth of a percent of the effort they put into targeted advertising towards trying to keep terrorists from using their sites, we wouldn’t be having this discussion,” says Keith Altman, lead counsel at Excolo Law.

Scientific American contacted Facebook, Google and Twitter for comment about the lawsuits. Only Facebook replied, stating, “We sympathize with the victims and their families. Our Community Standards make clear that there is no place on Facebook for groups that engage in terrorist activity or for content that expresses support for such activity, and we take swift action to remove this content when it’s reported to us.”

A recurring criticism against the social media companies is that they have been too slow to react to the dangerous content festering on their sites. “In 2015 we started to engage with social media companies to say, ‘There’s a problem and we think you should take some concrete steps to address it,’” says CEP executive director David Ibsen. “And we got not the most enthusiastic response from them. A lot of the pushback was that there wasn’t a technological solution, the problem is too big, free speech concerns, it goes against our ethos of free expression—all those different things.” The CEP challenged those excuses by turning to Farid. “He said there was a technological solution to the problem of removing extremist content from the internet, and that he had been working on it,” Ibsen says. “That was one of the first times you had a recognized computer engineer saying that what the tech companies were saying wasn’t true, that there is a technology solution.”

Unsolved Problems

Regardless of whether the CEP and social media companies come together, the technology they are developing would not prevent ISIS or its supporters from live-streaming video of an attack or propaganda speech on services such as Facebook Live or Twitter’s Periscope. Such video would have no matching signature in a database, and any attempt to flag a new video would need an algorithm that defines “terrorism,” so it knows what to look for, Farid says. “I know it when I see it,” he explains. “But I don’t know how to define it for a software program in a way that doesn’t trigger massive amounts of false alarms.” Algorithms that might automatically identify faces of well-known ISIS members in video work “reasonably well” but are not nearly accurate or fast enough to keep up with massive amounts of video streamed online, he says, adding: “I don’t see that being solved in the next decade at the internet scale.” Facebook’s inability to keep people from posting live footage of murders and suicides via Facebook Live underscores Farid’s point.

Even if the major social media companies continue to keep eGLYPH and the CEP at arm’s length, Wallace is hopeful internet service providers and smaller social media companies might still be interested in the technology. “Regardless, we hope it is a tool that drives people to confront the issue,” he says.

Farid acknowledges efforts to scale back ISIS’s use of social media are only part of a much larger battle that has many components—socioeconomic, political, religious and historic. “I’ve heard people say this [software] isn’t going to do anything,” he says. “My response: if your bar for trying to solve a problem is, ‘Either I solve the problem in its entirety or I do nothing,’ then we will be absolutely crippled in terms of progress on any front.”