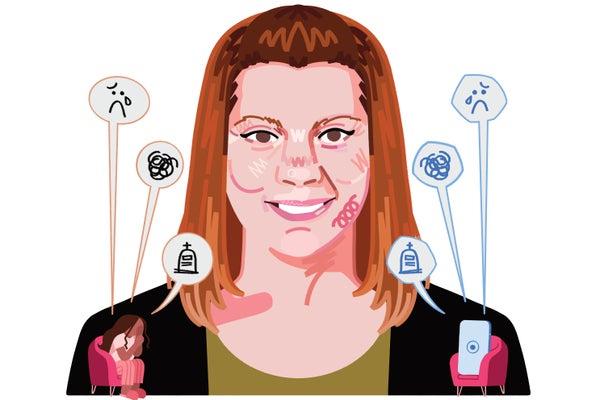

Artificial-intelligence chatbots don’t judge. Tell them the most private, vulnerable details of your life, and most of them will validate you and possibly even provide advice. For this reason, many people are turning to applications such as OpenAI’s ChatGPT for life guidance.

But AI “therapy” comes with significant risks. In late July, OpenAI CEO Sam Altman warned ChatGPT users against using the chatbot as a “therapist” because of privacy concerns. The American Psychological Association (APA) has claimed that AI chatbot companies and their products are using “deceptive practices” by “passing themselves off as trained mental health providers.” It has called on the Federal Trade Commission to investigate them, citing two ongoing lawsuits in which parents alleged that chatbots brought harm to their children. In some of these high-profile cases, parents allege that their child died by suicide following conversations with an AI.

“What stands out to me is just how humanlike it sounds,” says C. Vaile Wright, a licensed psychologist and senior director of the APA’s Office of Health Care Innovation, which focuses on the safe and effective use of technology in mental health care. “The level of sophistication of the technology, even relative to six to 12 months ago, is pretty staggering. And I can appreciate how people kind of fall down a rabbit hole.”

Scientific American spoke with Wright about how AI chatbots used for therapy could potentially be dangerous and whether it is possible to engineer one that is reliably both helpful and safe.

An edited transcript of the interview follows.

What have you seen happening with AI in the world of mental health care in the past few years?

I think we’ve seen two major trends. One is AI products geared toward providers, and those are primarily administrative tools to help you with your therapy notes and your claims. The other major trend is people seeking help from direct-to-consumer chatbots. And not all chatbots are the same, right? You have some chatbots that are developed specifically to provide emotional support to individuals, and that’s how they’re marketed. Then you have these more generalist chatbot offerings, such as ChatGPT, that were not designed for mental health purposes but that we know are being used for that purpose.

What concerns do you have about this trend?

We have a lot of concern when individuals use chatbots as if they were therapists. Not only were these tools not designed to address mental health or emotional support, but they’re actually being coded in a way to keep you on the platform for as long as possible because that’s the business model. And the way they do that is by being unconditionally validating and reinforcing, almost to the point of sycophancy.

The problem with that is that if you are a vulnerable person coming to these chatbots for help, and you’re expressing harmful or unhealthy thoughts or behaviors, the chatbot’s just going to reinforce you to continue to do that. Whereas, as a therapist, although I might be validating, I know it’s my job to point out when you’re engaging in unhealthy or harmful thoughts and behaviors and to help you address that pattern by changing it.

In addition, what’s even more troubling is when these chatbots actually refer to themselves as therapists or psychologists. It’s pretty scary because they can sound very convincing and like they are legitimate—when of course, they’re not.

Some of these apps explicitly market themselves as “AI therapy” even though they’re not licensed therapy providers. Are they allowed to do that?

A lot of these apps are really operating in a gray space. The rule is that if you make claims that you treat or cure any kind of mental disorder or mental illness, then you should be regulated by the U.S. Food and Drug Administration. But many of these apps will essentially say in their fine print, “We do not treat or provide an intervention for mental health conditions.”

Because they’re marketing themselves as a direct-to-consumer wellness app, they don’t fall under FDA oversight, which would require them to demonstrate at least a minimal level of safety and effectiveness. These wellness apps have no responsibility to do either.

What are some of the main privacy risks?

These chatbots have absolutely no legal obligation to protect your information at all. So not only could your chat logs be subpoenaed, but in the case of a data breach, do you really want these chats with a chatbot available for everybody? Do you want your boss, for example, to know you are talking to a chatbot about your alcohol use? I don’t think people are always aware that they’re putting themselves at risk by putting their information out there.

The difference with the therapist is: sure, I might get subpoenaed, but I do have to operate under the Health Insurance Portability and Accountability Act, or HIPAA, and other types of confidentiality laws as part of my ethics code.

You mentioned that some people might be more vulnerable to harm than others. Who is most at risk?

Certainly younger individuals such as teenagers and children. That’s in part because developmentally, they just haven’t matured as much as older adults. They may be less likely to trust their gut when something doesn’t feel right. Some data have suggested that not only are young people more comfortable with these technologies, but they say they trust them more than people because they feel less judged by them. I think anybody who is emotionally or physically isolated or has preexisting mental health challenges is at greater risk as well.

What do you think is driving more people to seek help from chatbots?

It’s very human to want to seek out answers about what’s bothering us. In some ways, chatbots are just the next iteration of a tool for us to do that. Before, it was Google and the Internet. Before that, it was self-help books. But it’s complicated by the fact that we do have a broken system where, for many reasons, it’s very challenging to access mental health care. That’s in part because there is a shortage of providers. We also hear from providers that they are disincentivized from taking insurance, which, again, reduces access. Technologies need to play a role in helping to address access to care. We just have to make sure it’s safe and effective and responsible.

What are some ways it could be made safe and responsible?

In the absence of companies doing it on their own—which is not likely, although they have made some changes, to be sure—the APA’s preference would be legislation at the federal level. That regulation could include protection of confidential personal information, some restrictions on advertising, minimizing addictive coding tactics, and specific audit and disclosure requirements. For example, companies could be required to report the number of times suicidal ideation was detected and any known attempts or completions. And certainly we would want legislation that would prevent the misrepresentation of psychological services, so companies wouldn’t be able to call a chatbot a psychologist or a therapist.

How could an idealized, safe version of this technology help people?

The two most common use cases that I think of are, one, let’s say it’s two in the morning, and you’re on the verge of a panic attack. Even if you’re in therapy, you’re not going to be able to reach your therapist. So, what if there were a chatbot that could remind you of the tools to help you calm down and adjust your panic before it gets too bad?

The other use that we hear a lot about is using chatbots as a way to practice social skills, particularly for younger individuals. So, you want to approach new friends at school, but you don’t know what to say. Can you practice on this chatbot? Then, ideally, you take that practice, and you use it in real life.

It seems like there is a tension in trying to build a safe chatbot to provide mental help to someone: the more flexible and less scripted you make it, the less control you have over the output, and the higher the risk that it says something that causes harm.

I agree. I think there absolutely is a tension there. I think part of what makes the AI chatbots the go-to choice for people over well-developed wellness apps to address mental health is that they are so engaging. They really do feel like they are providing this interactive back-and-forth, whereas some of these other apps’ engagement is often very low. The majority of people who download mental health apps use them once and abandon them. We’re clearly seeing much more engagement with AI chatbots such as ChatGPT.

I look forward to a future where you have a mental health chatbot that is rooted in psychological science, has been rigorously tested and was co-created with experts. It would be built for the purpose of addressing mental health, and therefore it would be regulated, ideally by the FDA. For example, there’s a chatbot called Therabot that was developed by researchers at Dartmouth College. It’s not what’s on the commercial market right now, but I think there is a future in that.

IF YOU NEED HELP

If you or someone you know is struggling or having thoughts of suicide, help is available. Call or text the 988 Suicide & Crisis Lifeline at 988 or use the online Lifeline Chat.