Sarah Vitak: This is Scientific American’s 60 Second Science. I’m Sarah Vitak.

Early last year a TikTok of Tom Cruise doing a magic trick went viral.

[CLIP: Deepfake of Tom Cruise says, “I’m going to show you some magic. It’s the real thing. I mean, it’s all the real thing.”]

Vitak: Only, it wasn’t the real thing. It wasn’t really Tom Cruise at all. It was a deepfake.

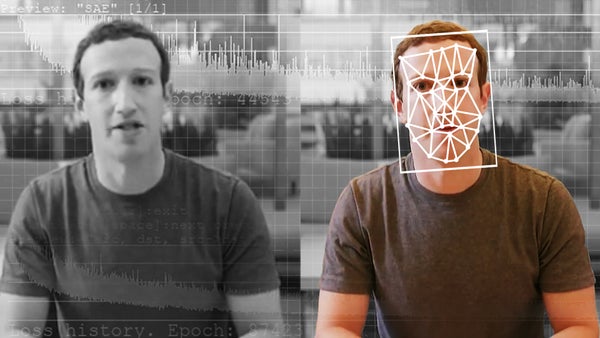

Matt Groh: A deepfake is a video where an individual's face has been altered by a neural network to make an individual do or say something that the individual has not done or said.

Vitak: That is Matt Groh, a Ph.D. student and researcher at the M.I.T. Media Lab. (Just a bit of full disclosure here: I worked at the Media Lab for a few years, and I know Matt and one of the other authors on this research.)

Groh: It seems like there’s a lot of anxiety and a lot of worry about deepfakes and our inability to, you know, know the difference between real or fake.

Vitak: But he points out that the videos posted on the Deep Tom Cruise account aren’t your standard deepfakes.

The creator, Chris Umé, went back and edited individual frames by hand to remove any mistakes or flaws left behind by the algorithm. It takes him about 24 hours of work for each 30-second clip. It makes the videos look eerily realistic. But without that human touch, a lot of flaws show up in algorithmically generated deepfake videos.

Being able to discern between deepfakes and real videos is something that social media platforms in particular are really concerned about as they need to figure out how to moderate and filter this content.

You might think, “Okay, well, if the videos are generated by an AI, can’t we just have an AI that detects them as well?”

Groh: The answer is kind of yes but kind of no. And so I can go—you want me to go into, like, why that? Okay, cool. So the reason why it’s kind of difficult to predict whether video has been manipulated or not is because it’s actually a fairly complex task. And so AI is getting really good at a lot of specific tasks that have lots of constraints to them. And so AI is fantastic at chess. AI is fantastic at Go. AI is really good at a lot of different medical diagnoses, not all, but some specific medical diagnoses, AI is really good at. But video has a lot of different dimensions to it.

Vitak: But a human face isn’t as simple as a game board or a clump of abnormally growing cells. It’s three-dimensional, varied. Its features create morphing patterns of shadow and brightness. And it’s rarely at rest.

Groh: And sometimes you can have a more static situation, where one person is looking directly at the camera, and much stuff is not changing. But a lot of times people are walking. Maybe there’s multiple people. People’s heads are turning.

Vitak: In 2020 Meta (formerly Facebook) held a competition where they asked people to submit deepfake detection algorithms. The algorithms were tested on a “holdout set,” which was a mixture of real videos and deepfake videos that fit some important criteria.

Groh: So all these videos are 10 seconds. And all these videos show actors, unknown actors, people who are not famous in nondescript settings, saying something that’s not so important. And the reason I bring that up is because it means that we’re focusing on just the visual manipulations. So we’re not focusing on “Do”—like, “Do you know something about this politician or this actor?” and, like, “That’s not what they would have said. That's not like their belief” or something. “Is this, like, kind of crazy?” We’re not focusing on those kinds of questions.

Vitak: The competition had a cash prize of $1 million that was split between top teams. The winning algorithm was only able to get 65 percent accuracy.

Groh: That means that 65 out of 100 videos, it predicted correctly. But it’s a binary prediction. It’s either deepfake or not. And that means it’s not that far off from 50–50. And so the question then we had was, “Well, how well would humans do, relative to this best AI on this holdout set?”

Vitak: Groh and his team had a hunch that humans might be uniquely suited to detect deepfakes, in large part because all deepfakes are videos of faces.

Groh: People are really good at recognizing faces. Just think about how many faces you see every day. Maybe not that much in the pandemic, but generally speaking, you see a lot of faces. And it turns out that we actually have a special part in our brains for facial recognition. It’s called the fusiform face area. And not only do we have this special part in our brain, but babies are even—like, have proclivities to faces versus nonface objects.

Vitak: Because deepfakes themselves are so new (the term was coined in late 2017), most of the research so far around spotting deepfakes in the wild has really been about developing detection algorithms: programs that can, for instance, detect visual or audio artifacts left by the machine-learning methods that generate deepfakes. There is far less research on humans’ ability to detect deepfakes. There are several reasons for this, but chief among them is that designing this kind of experiment for humans is challenging and expensive. Most studies that ask humans to do computer-based tasks use crowdsourcing platforms that pay people for their time. It gets expensive very quickly.

The group did do a pilot with paid participants but ultimately came up with a creative, out-of-the-box solution to gather data.

Groh: The way that we actually got a lot of observations was hosting this online and making this publicly available to anyone. And so there’s a Web site, detectfakes.media.mit.edu, where we hosted it, and it was just totally available and there were some articles about this experiment when we launched it. And so we got a little bit of buzz from people talking about it; we tweeted about this. And then we made this. It’s kind of high on the Google search results when you’re looking for deepfake detection and just curious about this thing. And so we actually had about 1,000 people a month come visit the site.

Vitak: They started with putting two videos side by side and asking people to say which was a deepfake.

Groh: And it turns out that people are pretty good at that, about 80 percent on average. And then the question was “Okay, so they’re significantly better than the algorithm on this side-by-side task. But what about a harder task, where you just show a single video?”

Vitak: Compared on an individual basis with the videos they used for the test, the algorithm was slightly better. People were correctly identifying deepfakes around 66 to 72 percent of the time, whereas the top algorithm was getting 80 percent.

Groh: Now, that’s one way. But another way to evaluate the comparison—and a way that makes more sense for how you would design systems for flagging misinformation and deepfakes—is crowdsourcing. And so there’s a long history that shows when people are not amazing at a particular task or when people have different experiences and different expertise, when you aggregate their decisions along a certain question, you actually do better than the individuals by themselves.

Vitak: And they found that the crowdsourced results actually had very similar accuracy rates to the best algorithm.
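[Illustration: a minimal sketch of the aggregation idea Groh describes, not the study's actual method. The transcript does not say how responses were combined, so this assumes a simple majority vote among voters who judge independently and are each correct with the same probability. The function name `majority_vote_accuracy` and the 0.7 individual accuracy are hypothetical choices for illustration only.]

```python
from math import comb


def majority_vote_accuracy(p: float, n: int) -> float:
    """Probability that a strict majority of n voters is correct.

    Assumes (for illustration) that voters are independent and that each
    one is correct with the same probability p. n must be odd so that a
    tie cannot occur.
    """
    if n % 2 == 0:
        raise ValueError("use an odd number of voters to avoid ties")
    needed = n // 2 + 1  # smallest number of correct votes that wins
    # Sum the binomial probabilities of every winning outcome.
    return sum(
        comb(n, k) * p**k * (1 - p) ** (n - k)
        for k in range(needed, n + 1)
    )


# Hypothetical individual accuracy of 0.7; crowd sizes are arbitrary.
for n in (1, 5, 25, 101):
    print(n, round(majority_vote_accuracy(0.7, n), 3))
```

[Under these assumptions, crowd accuracy rises with crowd size whenever individuals are better than chance. Real viewers are not independent, since they tend to miss the same hard videos, so the gain in practice would be smaller than this sketch suggests.]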

Groh: And now there are differences again, because it depends what videos we’re talking about. And it turns out that, on some of the videos that were a bit more blurry and dark and grainy, that’s where the AI did a little bit better than people. And, you know, it kind of makes sense that people just didn’t have enough information, whereas there’s the visual information that was encoded in the AI algorithm. And, like, graininess isn’t something that necessarily matters so much, they just—the AI algorithm sees the manipulation, whereas the people are looking for something that deviates from your normal experience when looking at someone—and when it’s blurry and grainy and dark—your experience already deviates. So it’s really hard to tell. But the thing is, actually, the AI was not so good on some things that people were good on.

Vitak: One of those things that people were better at was videos with multiple people. And that is probably because the AI was “trained” on videos that only had one person.

And another thing that people were much better at was identifying deepfakes when the videos contained famous people doing outlandish things. (Another thing that the model was not trained on.) They used some videos of Vladimir Putin and Kim Jong-un making provocative statements.

Groh: And it turns out that when you run the AI model on either the Vladimir Putin video or the Kim Jong-un video, the AI model says it’s essentially very, very low likelihood that’s a deepfake. But these were deepfakes. And they are obvious to people that they were deepfakes or at least obvious to a lot of people. Over 50 percent of people were saying, “This is, you know, this is a deepfake.”

Vitak: Lastly, they also wanted to experiment with trying to see if the AI predictions could be used to help people make better guesses about whether something was a deepfake or not.

So the way they did this was they had people make a prediction about a video. Then they told people what the algorithm predicted, along with a percentage of how confident the algorithm was. Then they gave people the option to change their answers. And amazingly, this system was more accurate than either humans alone or the algorithm alone. But, on the downside, sometimes the algorithm would sway people’s responses incorrectly.

Groh: And so not everyone adjusts their answer. But it's quite frequent that people do adjust their answer. And in fact, we see that when the AI is right, which is the majority of the time, people do better also. But the problem is that when the AI is wrong, people are doing worse.

Vitak: Groh sees this as a problem in part with the way the AI’s prediction is presented.

Groh: So when you present it as simply a prediction, the AI predicts 2 percent likelihood, then, you know, people don’t have any way to introspect what’s going on, and they’re just like, “Oh, okay, like, the AI thinks it’s real, but, like, I thought it was fake. But I guess, like, I’m not really sure. So I guess I’ll just go with it.” But the problem is that that’s not how, like, we have conversations as people. Like, if you and I were trying to assess, you know, whether this is a deepfake or not, I might say, “Oh, like, did you notice the eyes? Those don’t really look right to me,” and you’re like, “Oh, no, no, like, that, that person has, like, just, like, brighter green eyes than normal. But that’s totally cool.” But in the deepfake, like, you know, AI collaboration space, you just don’t have this interaction with the AI. And so one of the things that we would suggest for future development of these systems is trying to figure out ways to explain why the AI is making a decision.

Vitak: Groh has several ideas in mind for how you might design a system for collaboration that also allows the human participants to better utilize the information they get from the AI.

Ultimately, Groh is relatively optimistic about finding ways to sort and flag deepfakes—and also about how influential deepfakes of false events will be.

Groh: And so a lot of people know “Seeing is believing.” What a lot of people don’t know is that that’s only half the aphorism. The second half of the aphorism goes like this: “Seeing is believing. But feeling is the truth.” And feeling does not refer to emotions there. It’s experience. When you’re experiencing something, you have all the different dimensions, you know, of what’s going on. When you’re just seeing something, you have one of the many dimensions. And so this is just to get at this idea that, you know, seeing is believing to some degree. But we also have to caveat it with: there’s other things beyond just our visual senses that help us identify what’s real and what’s fake.

Vitak: Thanks for listening. For Scientific American’s 60 Second Science, I’m Sarah Vitak.

[The above text is a transcript of this podcast.]