You have probably encountered the base rate fallacy, and it probably fooled you. Part mathematical paradox and part cognitive bias, this mental oversight has surprisingly forceful things to say about many real-world situations, from our public health policy to mass-surveillance programs. Take, for instance, the following two puzzles. The first comes from the late psychologist Daniel Kahneman’s popular science book Thinking, Fast and Slow:

An individual has been described by a neighbor as follows: “Steve is very shy and withdrawn, invariably helpful but with little interest in people or in the world of reality. A meek and tidy soul, he has a need for order and structure, and a passion for detail.” Is Steve more likely to be a librarian or a farmer?

When asked this question in an experiment, Kahneman wrote, most people say Steve is more likely to be a librarian. They reason that Steve’s personality accords more with stereotypes of librarians than with those of farmers. But they ignore a highly pertinent statistical detail: farmers outnumber librarian professionals in the U.S. by more than 11 to one. A description of somebody’s personality shouldn’t override the vast size differences of the employment populations in question. With such a preponderance of farmers, we should expect that plenty of them have a passion for detail. This statistical bias becomes more obvious when the career possibilities have a starker difference in population sizes: Steve loves astronomy. Is he more likely to be a banker or an astronaut?

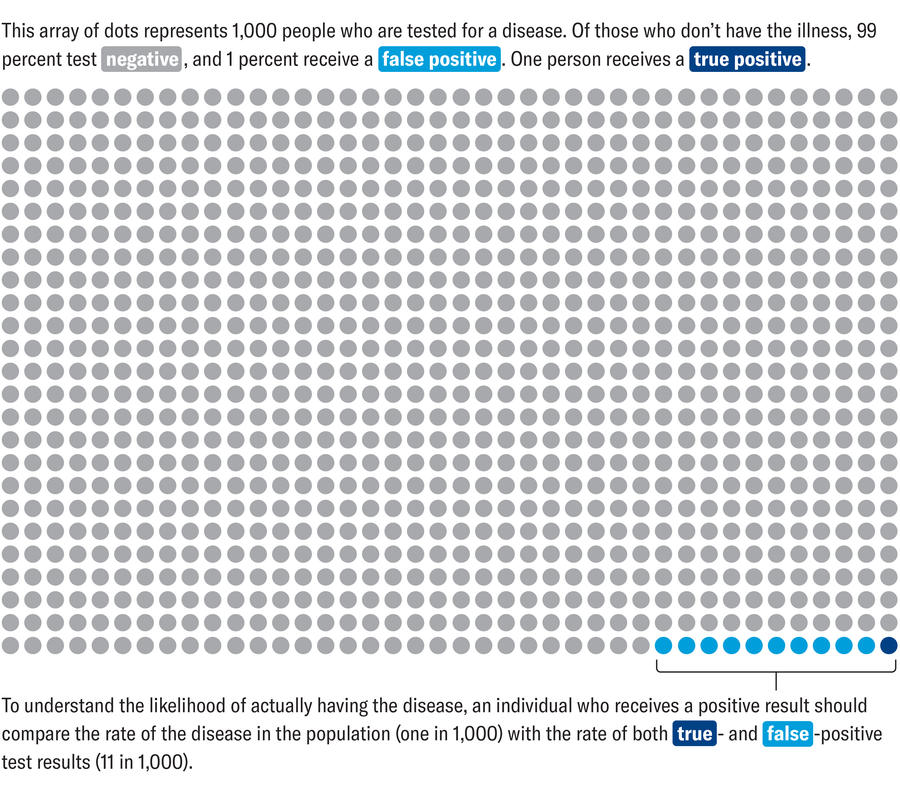

Puzzle number two gets more quantitative. Let’s say a doctor randomly decides to administer a blood test to you to scan for a certain disease that affects one in 1,000 people. The test is remarkably effective: it never gives a false negative, which means that if you have the disease, the test will detect it. False positives can happen but are rare: if you don’t have the disease, the test will say as much 99 percent of the time. Your test comes back positive. With these rates in mind, what is the probability that you have the disease? After the Steve example, you might have your guard up, ready for a trick. Try to inhabit the situation. You just got a positive result on an exceptionally accurate medical test. How worried do you feel?

With the given parameters, the chance that you actually have the disease is only about 9 percent. Imagine we tested 1,000 people. We expect one person in that group to have the disease and get a true positive result. Of the remaining 999 tests, 1 percent will yield false positives. That rounds to 10 people. So we expect 11 positive tests: 10 false positives and one true positive. Your positive test is one of those 11. What are the chances that you are the unfortunate one?
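For readers who want to check the arithmetic, the same answer falls out of Bayes' rule. The short Python sketch below is only an illustration: the function name and the parameter names (`prevalence`, `sensitivity`, `specificity`) are labels chosen here, not terms from the puzzle, and the inputs are the puzzle's stated rates.

```python
def probability_sick_given_positive(prevalence, sensitivity, specificity):
    """Bayes' rule: P(disease | positive test)."""
    # Share of the whole population that is sick AND tests positive.
    true_positive_share = prevalence * sensitivity
    # Share of the whole population that is healthy AND tests positive.
    false_positive_share = (1 - prevalence) * (1 - specificity)
    return true_positive_share / (true_positive_share + false_positive_share)


# The puzzle's numbers: 1 in 1,000 people are sick, the test never
# misses a true case, and it clears healthy people 99 percent of the time.
chance = probability_sick_given_positive(
    prevalence=1 / 1000,
    sensitivity=1.0,
    specificity=0.99,
)
print(f"Chance you are sick after a positive test: {chance:.1%}")
```

The result is about 9 percent, matching the count of one true positive among roughly 11 positive tests.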

Amanda Montañez

These puzzles showcase the base rate fallacy; the second one is also an example of the false positive paradox. When people judge the likelihood of a scenario, they tend to overweight the specific details in front of them and underweight the general prevalence of that scenario. They overweight the description of Steve being “a meek and tidy soul” and neglect the prevalence of farmers relative to librarians. They overweight a positive result on a test that’s 99 percent accurate and disregard the rarity of the disease.

Of course, you should not dismiss medical tests; that’s not the lesson here. Instead the false positive paradox demonstrates that interpreting the results of medical tests and deciding when to administer them require statistical literacy. Typically doctors order tests when they have reason to believe you might have a condition. If we pick you at random for testing, then your pretest likelihood of having the disease is simply the prevalence in the general population. But if you walk into a clinic with a distinctive rash and a high fever, you have moved into a different statistical bucket. You are being compared no longer to the general public but to other people with those specific symptoms. In this smaller group, the disease is far more common, making a positive result more indicative of a true case.
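To see how much the "statistical bucket" matters, here is a rough Python sketch that keeps the same hypothetical test (no false negatives, 99 percent of healthy people correctly cleared) and varies only the pretest probability. The values of 10 percent and 30 percent for symptomatic patients are invented for illustration; they are not figures from any study.

```python
def probability_sick_given_positive(pretest_probability,
                                    sensitivity=1.0,
                                    specificity=0.99):
    """Bayes' rule, with the pretest probability playing the role of the base rate."""
    true_positive_share = pretest_probability * sensitivity
    false_positive_share = (1 - pretest_probability) * (1 - specificity)
    return true_positive_share / (true_positive_share + false_positive_share)


# Hypothetical pretest probabilities (assumed for illustration only).
scenarios = {
    "picked at random from the public": 0.001,
    "distinctive rash and high fever": 0.10,
    "symptoms plus known exposure": 0.30,
}

posteriors = {
    label: probability_sick_given_positive(pretest)
    for label, pretest in scenarios.items()
}

for label, posterior in posteriors.items():
    print(f"{label}: {posterior:.0%} chance of disease after a positive test")
```

Under these assumed inputs, the same positive result that means roughly a 9 percent chance of disease for a random member of the public means better than a 90 percent chance for someone who arrived with the telltale symptoms.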

This situation explains why we don’t conduct mass screenings for rare diseases. When a disease has a small-enough base rate in the population, even highly accurate tests will produce more false positives than true positives. The benefit of catching a few cases is outweighed by the medical, financial and psychological harm caused by a flurry of false positives.

Welsh police learned this lesson the hard way during the 2017 Union of European Football Associations Champions League final. They deployed cameras throughout Cardiff, where the soccer event was held, and used automated facial-recognition software to analyze the footage. The software scanned the faces of about 170,000 fans, hunting for any that matched persons of interest. The system flagged 2,470 potential criminals, of whom 2,297 were false positives. The software wasn’t broken. It did what any system with a small chance of error does when applied indiscriminately. The case made national news and led to an ongoing legal battle in Wales over facial-recognition technology.
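The Cardiff figures can be put through the same lens. The Python sketch below is a back-of-the-envelope check, not an official analysis: it treats "about 170,000" as an exact count and assumes that nearly everyone scanned was not a person of interest, since the number of missed matches is not reported here.

```python
faces_scanned = 170_000    # "about 170,000"; treated as exact for this estimate
flagged = 2_470            # people the system flagged as possible matches
false_positives = 2_297    # flags that turned out to be wrong

# Flags that were correct.
true_positives = flagged - false_positives

# Of everyone flagged, what share was a genuine match?
share_of_flags_correct = true_positives / flagged

# Of everyone who was NOT a match, what share was wrongly flagged?
# Assumption: all non-flagged fans were innocent, so innocents are
# simply everyone scanned minus the true positives.
innocent_fans = faces_scanned - true_positives
false_alarm_rate = false_positives / innocent_fans

print(f"Correct flags: {true_positives}")
print(f"Share of flags that were correct: {share_of_flags_correct:.1%}")
print(f"Share of innocent fans wrongly flagged: {false_alarm_rate:.2%}")
```

On these assumptions, the system wrongly flagged only a little more than 1 percent of innocent fans, yet just 7 percent or so of its flags pointed to a real match. A small error rate spread over a huge, mostly innocent crowd swamps the handful of true hits.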

For similar reasons, any data-mining techniques used to catch would-be terrorists will fail, as security expert Bruce Schneier has written about more broadly. These programs scour phone records, location data and social networks in search of patterns that may indicate terrorist plots. The problem: terrorist plots don’t always have clearly identifiable warning signs (implying some chance of false positives), and most people aren’t terrorists (indicating a microscopic base rate in the population). Schneier’s back-of-the-envelope calculation suggests that for every real threat uncovered, tens of millions of false alarms could waste federal agents’ attention—with all of the associated expenses and liberty violations.

None of this implies that we should stop screening for rare events, but we should understand the trade-offs. Most fire alarms are false alarms, but they pose a small inconvenience in exchange for saving lives when disaster strikes. The base rate fallacy teaches us to contextualize false alarms and to stop conflating the accuracy of a test for an event with the probability of the event itself. It reminds us that when we are wading through knotty probabilistic questions, the most salient details may not be the most statistically relevant.